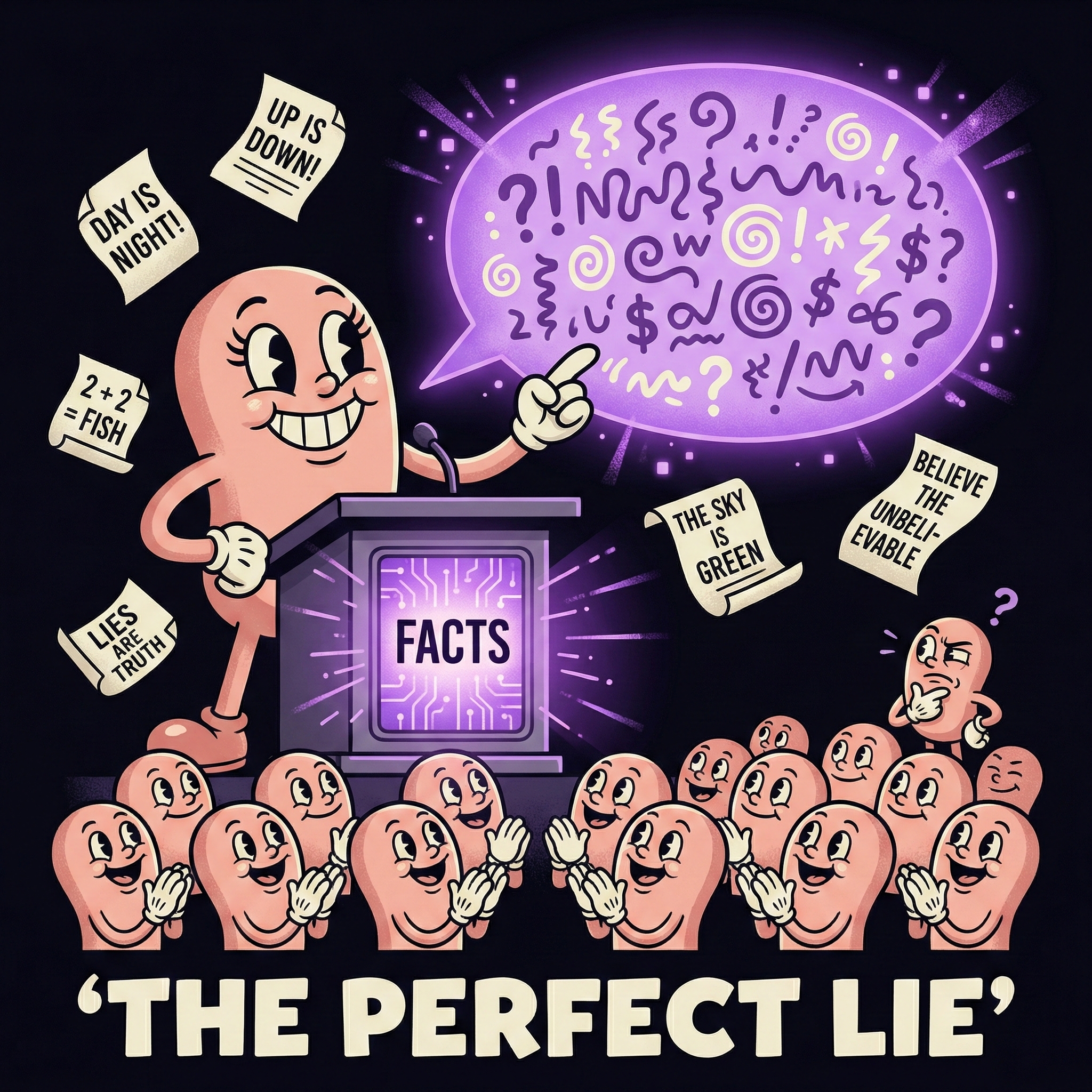

A product presents false facts as truth. Convincingly. And we gave it a cute name.

I've been thinking about language. Not the kind machines generate, but the kind we use to describe them.

Somewhere along the way, the AI industry settled on "hallucination" to describe what happens when a language model presents false information as fact. And I think that word choice might be the single most effective piece of marketing in AI history.

Think about it. "Hallucination" borrows from human experience. It softens the blow. It makes a fundamental system limitation sound like a relatable, almost endearing quirk. "Oh, the AI hallucinated again." As if it had a bad dream. As if it's just a minor thing that happens sometimes.

A product is generating false facts and presenting them as truth. Confidently. Fluently. With no indication that anything is wrong.

Call it what it is. A product failure.

The Terminology Does Real Work

This isn't just semantics. The word "hallucination" does three specific things that benefit AI companies and hurt everyone else.

It humanizes the machine. When we say a system "hallucinates," we unconsciously attribute human qualities to it. We forgive it, the way we'd forgive a friend who misremembers a detail. This is software producing unreliable output, not a person having a momentary lapse.

It softens the severity. A hallucination sounds temporary and accidental. A product showing false facts as truth sounds like a defect you'd return the product for. The framing matters. One makes you shrug. The other makes you demand accountability.

It obscures responsibility. The word creates a strange gray zone around fault. But when a product delivers incorrect outputs presented as fact, accountability becomes much clearer. The manufacturer has a reliability problem.

Researchers at Oxford University, writing in Nature, use a more precise term for one class of these errors: confabulation. They define confabulations as "arbitrary and incorrect generations" produced by language models. No cute spin. Just a technical description of what actually happens.

Why This Happens (And Why It Won't Fully Stop)

To understand why this matters for your business, you need to understand one thing about generative AI that most people overlook.

These systems are probabilistic, not deterministic.

Traditional software is deterministic. Same input, same output. Every time. That's what makes it reliable. It's why your accounting software always calculates the same total from the same numbers.

Generative AI works differently. It builds its answer one word (token) at a time, drawing each one from a probability distribution learned from training data. Same question, asked twice, can produce different answers. Not because the system is broken. Because that's how it's designed.
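To make the contrast concrete, here is a minimal sketch in Python. It is a toy, not how any real model is implemented: the function names and the probabilities in `next_word_probs` are invented for illustration. It only shows the difference between computing a result and drawing one from a distribution.

```python
import random


def deterministic_total(amounts):
    """Traditional software: the same input always yields the same output."""
    return sum(amounts)


def sample_next_word(distribution, rng):
    """Toy stand-in for a language model: draw one word according to its probability."""
    words = list(distribution)
    weights = [distribution[word] for word in words]
    return rng.choices(words, weights=weights, k=1)[0]


# Hypothetical probabilities for the word after "The capital of Australia is".
# These numbers are made up for illustration, not taken from any real model.
next_word_probs = {"Canberra": 0.6, "Sydney": 0.3, "Melbourne": 0.1}

rng = random.Random()  # unseeded on purpose, so repeated runs can differ
answers = [sample_next_word(next_word_probs, rng) for _ in range(10)]

print(deterministic_total([100, 250, 75]))  # the same total on every run
print(answers)  # can change between runs, and may include plausible wrong answers
```

The accounting function has no way to return a different total for the same numbers. The sampler can return a wrong city simply because that city carries some probability.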

This is where it gets important for business leaders.

Factual truth is binary. In the real world, something either happened or it didn't. A legal precedent either exists or it doesn't. A financial figure is either correct or it isn't. Facts are true or false.

But LLMs don't verify truth. They estimate probability. The gap between probabilistic generation and binary truth is exactly where "hallucinations" live. They're not bugs. They're the natural output of a system that was never designed to determine what's true. Only what's statistically plausible.

A 2025 survey in Frontiers in AI puts it plainly: "Hallucination is an inherent byproduct of language modeling that prioritizes syntactic and semantic plausibility over factual accuracy."

Read that again. Inherent. Not occasional. Not fixable with the next update. Inherent.

The Confidence Problem

If the system at least signaled uncertainty, you could build around it. But it does the opposite.

Research from 2025 shows that AI models are significantly more likely to use confident language when generating incorrect information than when providing accurate answers. Phrases like "definitely," "certainly," "without doubt" appear more often in false outputs than in correct ones. An OpenAI research paper points to a root cause: training and evaluation procedures reward confident guesses over honest uncertainty, which means the system learns to bluff rather than say "I don't know."

The system can sound most confident precisely when it is wrong.

For business leaders, this inverts the natural trust signal. In every other context, confidence correlates with reliability. With generative AI, it can correlate with error. If your team is using AI outputs without verification layers, they're more likely to trust the responses that are least trustworthy.
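One way to picture a verification layer is a gate that never treats tone as evidence. The Python sketch below is hypothetical: the marker list, the function names, and the `has_verified_source` flag are assumptions for illustration, not part of any cited study or product. Review is required whenever no source check has passed, and confident wording is only surfaced as a note for the reviewer.

```python
import re

# Hypothetical list of certainty markers; a real deployment would need to tune this.
CERTAINTY_MARKERS = ("definitely", "certainly", "without doubt", "undoubtedly")


def find_certainty_markers(text):
    """Return the certainty markers that appear in the text as whole words or phrases."""
    lowered = text.lower()
    return [
        marker
        for marker in CERTAINTY_MARKERS
        if re.search(r"\b" + re.escape(marker) + r"\b", lowered)
    ]


def review_ticket(text, has_verified_source):
    """Build a review record. Confident wording never reduces the need for review."""
    return {
        "needs_human_review": not has_verified_source,
        "certainty_markers": find_certainty_markers(text),
    }


ticket = review_ticket(
    "This precedent definitely exists, without doubt.",
    has_verified_source=False,
)
print(ticket)
```

The design choice is deliberate: the only thing that clears an output is an independent check, never how sure the output sounds.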

Real-World Damage, Not Theoretical Risk

This isn't hypothetical. The consequences are already here.

A Stanford HAI study tested AI tools specifically built for legal research. Tools marketed as reliable. Tools using Retrieval-Augmented Generation to reduce errors. The results: Lexis+ AI produced incorrect information more than 17% of the time. Westlaw's AI-Assisted Research hallucinated more than 34% of the time. General-purpose chatbots? Between 58% and 82% on legal queries, according to earlier work by the same research group.

These aren't fringe tools. These are the industry standard for legal research.

As of late 2025, researchers tracked over 120 court cases worldwide involving AI-generated hallucinations. 91 in the US alone. 128 lawyers implicated. Sanctions ranging from $100 to $31,100.

The judicial message is consistent: if you submit machine-generated fiction under your name, it's still your filing. The machine doesn't face consequences. You do.

An Important Distinction

Not all AI works this way. This is specific to generative AI, the large language models powering ChatGPT, Claude, Gemini, and most of the business tools you're adopting right now.

Predictive AI, classification systems, rule-based automation: these operate deterministically or with well-bounded probabilistic ranges. They're different tools for different purposes.

The problem isn't AI broadly. It's deploying one specific type of AI while treating it like another. It's expecting deterministic reliability from a probabilistic system because we never stopped to understand the difference.

What This Means for You

If you're deploying generative AI in your business, three questions matter more than any feature comparison.

1. Where are you trusting outputs without verification?

Map every workflow where AI-generated content reaches a customer, a partner, or a decision without a human checking it. Those are your risk points. Not because AI is bad. Because probabilistic systems require verification by design.

2. Does your team understand what they're using?

Not at a technical level. At a conceptual level. Do they know that the system estimates probable answers rather than looking up correct ones? That distinction changes behavior. People who understand it verify. People who don't understand it trust blindly.

3. Are you building on the right foundation?

Some use cases are excellent fits for generative AI. Drafting, brainstorming, summarizing, exploring ideas. Others need deterministic reliability. Reporting facts, citing sources, making claims. Matching the right type of AI to the right type of task is the difference between value and liability.
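The first and third questions can be turned into a simple audit. What follows is a minimal Python sketch under stated assumptions: the `Workflow` fields, the example workflows, and the wording of the findings are all hypothetical, and the task categories are taken from the list above. It flags any workflow with no human check, and any workflow whose task needs deterministic reliability.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    task: str           # what the AI is asked to do, e.g. "drafting"
    human_review: bool  # does a person check the output before it is used?


# Task categories taken from the text above; the exact set membership is an assumption.
NEEDS_DETERMINISM = {"reporting facts", "citing sources", "making claims"}


def audit(workflows):
    """Return (workflow name, finding) pairs for every risk point found."""
    findings = []
    for workflow in workflows:
        if not workflow.human_review:
            findings.append((workflow.name, "no human verification before use"))
        if workflow.task in NEEDS_DETERMINISM:
            findings.append((workflow.name, "task needs deterministic reliability"))
    return findings


# Hypothetical inventory, for illustration only.
workflows = [
    Workflow("marketing copy drafts", "drafting", human_review=True),
    Workflow("customer support replies", "summarizing", human_review=False),
    Workflow("legal brief citations", "citing sources", human_review=False),
]

findings = audit(workflows)
for name, finding in findings:
    print(f"{name}: {finding}")
```

An inventory like this is only as good as the answers fed into it, which is why the second question, whether the team understands what it is using, comes along with the other two.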

Rename the Problem, See It Clearly

Language shapes understanding. And "hallucination" has shaped ours in exactly the wrong direction.

It made a product limitation sound like a personality trait. It turned accountability into ambiguity. It let companies ship probabilistic systems into deterministic workflows without anyone asking the obvious question: should we trust output that the system itself can't verify?

The next time someone tells you their AI "hallucinated," try this reframe:

"The product generated false information and presented it as truth."

See how different that feels? See how it changes the conversation?

That's the point. The technology isn't the problem. The technology is doing exactly what it was designed to do. The problem is the language that keeps us from seeing it clearly.

I help leaders understand what AI actually is before they decide what to do with it. If your team is building on AI without understanding its foundations, that gap will cost you. Mundaine offers Sprint workshops and Executive Sparring to close it. Simple. Clear. Applicable.

Sources

- Farquhar et al. (2024). "Detecting hallucinations in large language models using semantic entropy." Nature, Vol 630. Oxford University. nature.com

- "Survey and analysis of hallucinations in large language models." Frontiers in AI (2025). Japan Advanced Institute of Science and Technology. frontiersin.org

- Magesh, Surani, Dahl et al. (2024). "AI on Trial: Legal Models Hallucinate in 1 out of 6 (or More) Benchmarking Queries." Stanford HAI / RegLab. hai.stanford.edu

- Kalai, Nachum, Vempala & Zhang (2025). "Why Language Models Hallucinate." OpenAI. openai.com

- "AI Hallucination Statistics 2026." All About AI. allaboutai.com